Facebook Founder and CEO Mark Zuckerberg

Earlier this week, several international and national news agencies reported that Facebook 'shut down' or 'unplugged' its Artificial Intelligence 'system' after discovering that its bots had started speaking to each other in a 'non-human' language. The headlines implied an immediate shutdown as things went out of control, sparking fears of the beginnings of a dystopian robot uprising against humans.

The story snowballed and morphed into something else as some news outlets incorrectly reported that Facebook had shut down the program, whereas Facebook's researchers had only reprogrammed it. The stories also did not provide adequate context: bots inventing their own language is not a new phenomenon, and the incident was part of a research project, not an actual feature rolled out to Facebook users.

Curiously, Facebook has been tight-lipped and has not issued a statement to quell the news reports.

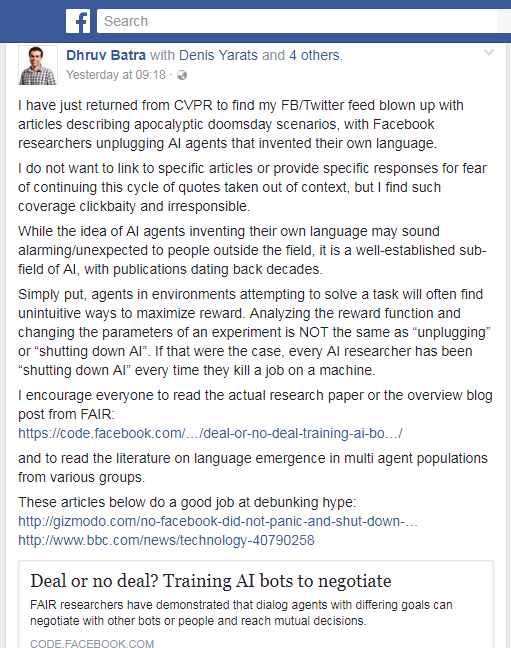

But the media coverage prompted one of Facebook Artificial Intelligence Research (FAIR) project's key researchers to slam news reports through a post on the social networking site on July 31.

Dhruv Batra, visiting research scientist at Facebook AI Research, in a scathing post called the coverage ‘clickbaity and irresponsible’ for not distinguishing between ‘changing parameters of an experiment’ and ‘unplugging / shutting down AI’.

Batra also wrote that while AI agents inventing their own language may sound alarming to people outside the field, it is a well-established sub-field of AI.

An email to Batra from BOOM with additional questions went unanswered.

We try to answer the questions around this incident and piece together a timeline to explain how alarmist narratives in the media were created.

What was the research about?

Facebook AI Research (FAIR) studied the possibility of enabling chat bots to ‘reason, converse and negotiate’. Chat bots are computer programs that can simulate human conversations using Artificial Intelligence.

The chatbot ‘dialogue agents’ were given the task of dividing a set of items, such as 2 books, 1 hat and 3 balls, between themselves through negotiation. As in a real human negotiation, each item has a different value for each agent (with all the items adding up to a value of 10 for each agent), and these values are unknown to the other agent. To ensure competitive negotiation, the exercise is reward based. If a deal is not struck after 10 rounds of dialogue between the agents, they are allotted 0 points.
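The scoring described above can be illustrated with a short Python sketch. It is not FAIR's code: the item counts come from the example in this article, while the two agents' private valuations, the final split and the function names are hypothetical and chosen only so that each agent's items add up to 10.

```python
# Item counts from the article's example: 2 books, 1 hat and 3 balls.
ITEM_COUNTS = {"book": 2, "hat": 1, "ball": 3}


def total_value(values, counts=ITEM_COUNTS):
    """Value of the whole pool of items to one agent."""
    return sum(values[item] * counts[item] for item in counts)


def score(values, share, deal_reached):
    """Points an agent earns: the value of its share, or 0 if no deal is struck."""
    if not deal_reached:
        return 0
    return sum(values[item] * share[item] for item in share)


# Hypothetical private valuations (assumed for illustration only).
alice_values = {"book": 1, "hat": 2, "ball": 2}  # 2*1 + 1*2 + 3*2 = 10
bob_values = {"book": 3, "hat": 4, "ball": 0}    # 2*3 + 1*4 + 3*0 = 10

# One possible agreed split: Alice takes the balls, Bob takes the rest.
alice_share = {"book": 0, "hat": 0, "ball": 3}
bob_share = {"book": 2, "hat": 1, "ball": 0}

print(score(alice_values, alice_share, deal_reached=True))   # 6
print(score(bob_values, bob_share, deal_reached=True))       # 10
print(score(alice_values, alice_share, deal_reached=False))  # 0
```

Because the same item is worth different amounts to each agent, a split can leave both sides reasonably well off, while failing to agree leaves both with nothing.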

The major innovation by FAIR was to develop ‘dialogue rollouts’, which means the agents 'think ahead': they build mental models of how possible dialogues might play out to the end and choose the ones most likely to succeed in striking deals. This model of prediction through rollouts of possible dialogues saw the bots emerge with certain negotiation strategies.
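The 'think ahead' idea can be sketched roughly in Python. This is a toy illustration and not FAIR's implementation: the real system relies on a learned dialogue model, whereas here each candidate message simply carries an assumed chance of leading to a deal and an assumed payoff. The function names, candidate messages and numbers are all hypothetical.

```python
import random


def simulate_rest_of_dialogue(candidate, rng):
    """Hypothetical stand-in for a dialogue model.

    Imagines one way the conversation could end after saying `candidate`
    and returns the reward: the payoff if a deal is struck, otherwise 0.
    """
    if rng.random() < candidate["deal_probability"]:
        return candidate["payoff"]
    return 0


def choose_by_rollout(candidates, n_rollouts=200, seed=0):
    """Pick the message whose imagined dialogues earn the most on average."""
    rng = random.Random(seed)

    def average_reward(candidate):
        rewards = [simulate_rest_of_dialogue(candidate, rng) for _ in range(n_rollouts)]
        return sum(rewards) / n_rollouts

    return max(candidates, key=average_reward)


# Assumed numbers: a greedy demand pays more but rarely ends in a deal.
candidates = [
    {"text": "I want everything.", "deal_probability": 0.1, "payoff": 10},
    {"text": "I'll take the balls, you take the rest.", "deal_probability": 0.8, "payoff": 6},
]

best = choose_by_rollout(candidates)
print(best["text"])
```

With these assumed numbers, the moderate offer wins on average reward even though the greedy demand has the higher payoff, which is the kind of trade-off that looking ahead is meant to capture.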

When pitted against humans, the agents tended to negotiate harder and longer. They learned to deceive by feigning interest in an item of little value to them and later ‘compromising’ on it in exchange for an item they valued. They also generated new sentences that did not appear in the training data.

How Facebook AI bots became Terminators: A Timeline

June 14: Facebook published a blog post - Deal or no deal? Training AI bots to negotiate with an overview of their research on machine learning. This was followed by a research paper published on June 16.

July 14: Technology website 'Co-design' published a story, ‘AI is Inventing Languages Humans Can’t Understand. Should We Stop It?’ The article, which questioned whether researchers should allow AI agents to develop their own language, included quotes from FAIR researchers Mike Lewis and Dhruv Batra. It also included a conversation between two chat bots, Bob and Alice, which subsequently found its way into many other articles on technology websites.

Bob: “I can can I I everything else.”

Alice: “Balls have zero to me to me to me to me to me to me to me to me to.”

The article explained how a 'programming mistake' led the bots, which had been trained to speak in English, to start using English words in a nonsensical manner.

FAIR team member Mike Lewis was quoted as saying, “Our interest was having bots who could talk to people”.

Thus, Co-design's article said that, ‘Facebook ultimately opted to require its negotiation bots to speak in plain old English’.

BOOM contacted Mark Wilson, the author of the Co-Design article, who also agreed that Facebook had only reprogrammed the bots and not shut them down.

[blockquote width='100']

"My understanding is that it was reprogrammed to speak in English. Do you shut it down for a moment to do so? I mean, probably? But here's a point where literalism is difficult." - Mark Wilson told BOOM.

[/blockquote]

This is in line with what FAIR researcher Batra has clarified through his Facebook post suggesting the bots were not shut down but were reprogrammed during the course of the research to speak only in English.

July 15: Tesla Inc founder and Chief Executive Elon Musk, a skeptical backer of AI research, said at a conference, ‘AI is a fundamental existential risk for human civilization’ and ‘I think by the time we are reactive in AI regulation, it's too late’. (Elon Musk Warns Governors: Artificial Intelligence Poses 'Existential Risk')

July 24: Facebook founder and Chief Executive Mark Zuckerberg, during a Facebook Live, responded to a question on Musk’s statement by saying, "I think people who are naysayers and try to drum up these doomsday scenarios – I just, I don’t understand it. It’s really negative and in some ways I actually think it is pretty irresponsible. ... In the next five to ten years, AI is going to deliver so many improvements in the quality of our lives."

July 25: Musk hit back in a reply to Zuckerberg, calling his knowledge of AI limited.

July 30 to August 01: Several news agencies reported that Facebook had shut down its AI after its bots started speaking in a non-human language.

International Business Times reported AI invents its own language: Did we humans just create Frankenstein?

Independent said Facebook’s Artificial Intelligence Robots shut down after they start talking to each other in their own language.

Forbes said Facebook AI Creates Its Own Language In Creepy Preview Of Our Potential Future

Not to be left behind, the Indian media also reported the story. Most Indian news agencies picked the news up from wire agency IANS, which cited a July 30 report by Tech Times, which in turn linked to a July 29 story by Inquisitr. However, Inquisitr, along with several other websites, selectively used the divergent bot conversation from the July 14 Co-Design article to weave a story of how this echoes the Skynet scenario from The Terminator. (Click here for Indian media coverage - NDTV and HT)

The IANS account incorrectly said the incident took place just days after a verbal spat between Facebook's CEO and Musk, who exchanged harsh words in a debate on the future of AI. This account, which was the reference point for several Indian news agencies, also got the sequence of events wrong.

Bots chatting in their own language: Who else is working on this?

Interestingly, Elon Musk, who had a spat with Mark Zuckerberg, also has a team of researchers at his artificial intelligence lab OpenAI exploring a new path to machines that can converse not only with humans but also with each other, technology website Wired reported on March 16 this year.

Wired profiled Igor Mordatch, a visiting researcher at OpenAI who is building virtual worlds where software bots learn to create their own language out of necessity.

The research report Wired referred to was released on March 15 (Click here). In it, Mordatch and his co-author Pieter Abbeel conclude:

[blockquote width='100']

In the future, we would like to experiment with larger number of actions that necessitate more complex syntax and larger vocabularies. We would also like integrate exposure to human language to form communication strategies that are compatible with human use.

[/blockquote]

We haven't heard the last on this issue. With bots already making their presence felt in the real world, there is no stopping research that would widen the application of AI in our daily lives. And that may involve bots talking to each other in a language of their own, but one that humans can understand and work with.